In this blog, Michael Thomas discusses the potential impact of generative AI tools such as ChatGPT on education.

Generative artificial intelligence, such as ChatGPT, is a form of AI that can generate human-like text based on a ‘large language model’ – a statistical model of language trained on vast amounts of text, much of it taken from the internet. It can write essays and summarise facts, and it can give feedback on written work and Excel formulae. There are versions that can generate other types of content, such as images from text, or music. I used DALL·E to generate the above image in response to the text prompt “draw a photorealistic picture of a university administrator thinking very hard about artificial intelligence” (I added the text!). Taken together, generative AI represents an immensely powerful tool.

In education, one of the principal methods of encouraging conceptual learning, developing writing skills, and assessing knowledge is to ask students to write essays independently. However, students are now increasingly using generative AI in their work (see, e.g., this recent article from the BBC: ‘Most of our friends use AI in schoolwork’). This is causing concern among educators and parents alike.

For educators, generative AI represents a significant challenge. Can teachers no longer use essays as an educational tool? Has a principal form of assessment been lost? Generative AI is immensely powerful but it has limitations: it generates plausible, ‘high probability’ text, not necessarily factually correct text, and the content it generates can be biased based on what the AI has found on the internet. Are students using a tool that leads them astray?

Like search engines, generative AI cannot be uninvented. Instead, students should be guided on how best to use generative AI to support their learning. But right now, students frequently know more than educators do about what generative AI can do.

CEN Director Michael Thomas recently attended a meeting of the All-Party Parliamentary Group (APPG) on Artificial Intelligence at the UK House of Lords, convened to discuss the potential impact (for better or worse) of generative artificial intelligence on education. He wrote a report of the meeting for the education think tank Learnus. The 3-page report can be found here.

Here are the main points from the report of the House of Lords meeting:

1. No one was panicking that AI robots were going to take over the world – although everyone recognised the downside risks of generative AI (e.g., inaccurate and biased content, age-inappropriate content, commercial ownership, data privacy). Instead, the main focus was on opportunities.

2. Among experts, there was a diverse range of views on what tools like ChatGPT mean for education – all the way from ‘that don’t impress me’ to ‘it’s a stepping stone to utopia’. Some thought it on a par with the introduction of calculators to maths class, or of search engines for researching essays and projects: a helpful tool, necessitating some tweaking of teaching practice, but not much more. Others thought it would fundamentally alter educational practices and was an opportunity to democratise education – a tool to provide support for all.

3. The kids currently know much more than the teachers – pretty much everyone agreed that the most important first step is to improve teacher literacy on generative AI, to understand what these systems can (and can’t) do, and to begin to think about how they may be used. Perhaps the most important take-home for teachers and students alike is that you’ve got to know the limitations of the technology.

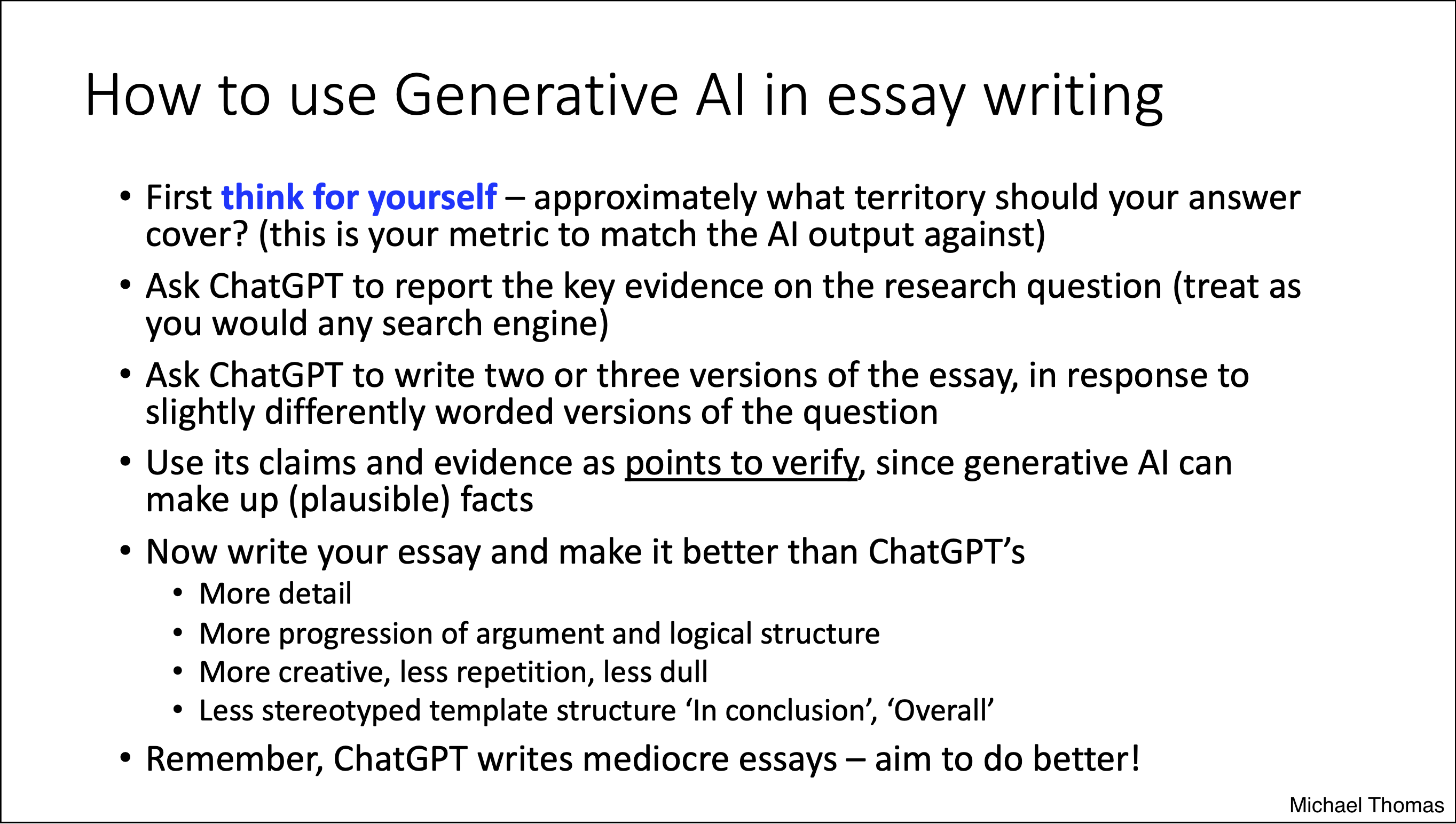

4. Guidance is beginning to emerge – institutions are thinking hard about the educational impact of generative AI, and some guidance is beginning to emerge (e.g., from the UK Department for Education and from the Russell Group of UK universities). As an example, this term, I gave a lecture to university psychology students on how they might use ChatGPT as a tool in their essay writing. I let them know what the chances are of getting caught if they simply use it to write their assessments (given that universities use AI detection tools, and that ChatGPT essays are reasonably easy to spot for content experts); and I also told them the very mediocre mark they would likely receive for an AI generated essay even if they didn’t get caught – because ChatGPT doesn’t write great essays. Here’s a slide summarising some tips:

There are many ways generative AI can be useful in education: to suggest initial ideas, to give feedback on text, to help second-language learners improve their writing, and to check and recommend Excel formulae or computer code.

There are inevitably pitfalls we need to avoid (mostly linked to ensuring that content is unbiased and factually true, and that creativity is not stifled – ChatGPT will encourage you to write just like everyone else on the internet!).

But the broad message should be a positive one. In the same way that the invention of search engines gave everyone unprecedented access to vast stores of human knowledge (but ‘knowledge’ not to be treated uncritically), generative AI can empower learners. The search is on for the best guidance to allow students to realise the potential of this new tool and avoid its pitfalls.

Did ChatGPT just ruin education? No, it gave education a powerful new tool, but with an instruction manual yet to be written.